7 Social Epistemology

William D. Rowley

Chapter Learning Outcomes

Upon completion of this chapter, readers will be able to:

- Discuss multiple ways in which social interactions affect the epistemic status of our beliefs.

- Differentiate between theories of testimonial justification.

- Explain the problem of peer disagreement and how it emerges from common intuitions about everyday situations.

- Compare and contrast solutions to the problems of testimonial justification and peer disagreement.

Introduction: What Is Social Epistemology?

Human beings are social animals. We live in networks of interdependence. We depend on each other for many things—including the truth. True beliefs keep us from eating poisonous mushrooms, getting shocked by live circuits, and getting into car accidents. The need to transmit true beliefs is pressing because none of us individually can get all the true beliefs we need on our own. But we know that not everyone tells the truth. Therefore, our many-dimensioned dependence on each other for our beliefs raises epistemological questions. Social epistemology (SE) is the study of how social relationships and interactions affect the epistemic properties of individuals and groups.

This chapter will focus on two important issues in SE: the epistemology of testimony and peer disagreement. The epistemology of testimony is central to SE because, without what others tell us about the world, we would know very little about it. We would be almost entirely ignorant of history, science, and current affairs—not to mention the inner lives of others. Philosophers refer to this telling as testimony—whether it takes the form of speech, text, or something else (Lackey 2006).

Though there are many questions worth asking, the place to start is by wondering:

Under what conditions does testimony confer positive epistemic status on its content?

I will focus on our justification for believing testimony. However, we may hope that better understanding testimonial justification will help us understand testimonial knowledge as well.[1]

Testimony is not the only way in which others affect what we believe. We can find out how the world is indirectly, by seeing whether others agree or disagree with us or with each other. For this reason, we seek out and value second opinions as a check on our fallible sources. But we sometimes seem to dismiss disagreement. Many of our strongly held beliefs—philosophical, religious, or political—are controversial, and we know it. This, too, presents an epistemological puzzle. To lead us into the epistemology of disagreement, we will ask:

What is the epistemically rational response to discovering that someone disagrees with us?

Epistemological questions about testimony and disagreement are core issues in social epistemology and will be the focus of this chapter. The following social epistemological issues will not be covered, but may still interest the reader:

- Collective epistemology: We speak of groups as having intentions and beliefs.[2] When do the beliefs of groups count as justified, or as knowledge?[3]

- Agnotology: Sometimes individuals or groups have an interest in others being ignorant of some truth. How do propagandists, purveyors of fake news, and others exploit communication to render their targets ignorant?[4]

- Epistemic injustice: Not all members of a communication network are treated equally. What are the epistemic consequences of these inequalities?[5]

- Epistemic democracy: Can votes be construed as testimony about the best candidate or policy? Is collective opinion more likely to yield truth than individual opinion? Can democracy be justified on such epistemic grounds?[6]

Oddly enough, Western philosophy has historically neglected social epistemology. Individual pursuit of truth has generally been held up as a check on the fallibility of social sources of information, whether in the classical[7] or modern eras.[8] Interestingly, outside of the West, especially in Indian philosophy, there has been lively development of social epistemology.[9]

Social epistemology has taken on new urgency in light of the rapid changes brought on by new technology. Peer-reviewed research of the highest quality can be freely accessed in a matter of moments—as can conspiracy theories, radical manifestos, and celebrity medical tips.

Testimony as a Source of Knowledge and Justified Belief

What people tell us is critical for our understanding of the world. It furnishes us with beliefs, many of which we are sometimes happy to call “knowledge.” Still, how can this be when deception (or other error) can rarely be ruled out? Why think that beliefs based on the testimony of others are ever justified?

A. Reductionism

One answer is that we learn that (some) testimony is worthy of belief. A spoken or written word is an artifact or event in the world. Perhaps testimony justifies belief by our learning that testimony correlates with truth. This seems to be what Scottish philosopher David Hume (1711–1776) had in mind when he wrote that our assurance of testimony “is derived from no other principle than our observation of the veracity of human testimony, and of the usual conformity of facts to the reports of witnesses” (Hume [1777] 1993, 74).

One way of understanding Hume is this: similar to how we’ve learned that smoke is caused by fire, we’ve also learned through observation that testimony tends to be true. Testimony is evidence only because we have inductive evidence based on other kinds of evidence (observation and memory, especially). In effect, testimonial justification reduces to other forms of justification.

We can formulate (testimonial) reductionism as follows: you are justified in believing some S’s testimony that p, if and only if:

- You receive S’s testimony that p (you hear, read, or otherwise come to know about it and understand that S’s testimony means that p);

- You have (broadly) inductive evidence based on observation for the reliability of S’s testimony that p; and,

- p is not defeated by other evidence you have.

Thus, according to reductionism, we are justified in believing someone’s testimony only if we have testimony-independent evidence (e.g., sensation, introspection, or memories of sensation or introspection) for believing them.
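The reductionist conditions can be given a rough numerical illustration. The sketch below is only one way of modeling them, not Hume's own account: it assumes that the hearer has a checkable track record for S, that reliability is estimated from that record with Laplace's rule of succession, and that belief is updated by Bayes' rule. The function names and the track record of 18 true reports out of 20 are hypothetical.

```python
def estimated_reliability(true_reports: int, total_reports: int) -> float:
    """Estimate S's reliability from an observed track record.

    Uses Laplace's rule of succession, (successes + 1) / (trials + 2),
    which is an assumption of this sketch rather than part of reductionism.
    """
    if not 0 <= true_reports <= total_reports:
        raise ValueError("need 0 <= true_reports <= total_reports")
    return (true_reports + 1) / (total_reports + 2)


def credence_after_testimony(prior: float, reliability: float) -> float:
    """Credence in p after S testifies that p, by Bayes' rule.

    Assumes S asserts p with probability `reliability` when p is true
    and with probability 1 - reliability when p is false.
    """
    says_p_and_true = reliability * prior
    says_p_and_false = (1 - reliability) * (1 - prior)
    return says_p_and_true / (says_p_and_true + says_p_and_false)


# Hypothetical track record: 18 of S's 20 checkable reports turned out true.
r = estimated_reliability(18, 20)
print(round(r, 3))
# Starting from an even prior, the hearer's credence rises to S's reliability.
print(round(credence_after_testimony(0.5, r), 3))
```

On this toy model, the hearer's justification comes entirely from the observed track record, which is the reductionist's point. With no track record at all, the estimate falls back to 0.5 and testimony leaves the prior unchanged.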

Reductionism looks like a promising way of answering our question about the conditions of justified testimonial belief. It comports well with reflective common sense. If we know someone to be especially honest and knowledgeable about a topic, we have stronger than usual justification to believe their testimony. On the other hand, if we know someone is prone to lying, we are usually not justified in believing their testimony. Reductionism seems to justify a commonsensical level of skepticism about testimony—but not too much skepticism.

Box 1 – Solving the Missing Evidence Puzzle

The puzzle: Testimony does not work by evidence transmission. Your telling me that p does not give me your evidence for p. But I still rely on your evidence in some sense. How can I rely on your evidence without having it?

A two-step solution for reductionists:

- Step 1. We have inductive evidence that people tend to follow norms of communication that require knowledge or evidence when testifying that p. So, your testimony that p gives me evidence that you have some evidence in support of p (even if I don’t know what your evidence is).

- Step 2. Apply the evidence of evidence principle (EEP), which says, roughly, that whenever I have some evidence that you have some evidence in support of p, then I have some evidence in support of p. (And so, this evidence about you would enable me to reason to the conclusion that p.)

Together, Step 1 and Step 2 imply that your testimony that p gives me evidence in support of p (without giving me your evidence for p) (Rowley 2016).

B. Objecting to Reductionism

Thomas Reid (1710–1796), Hume’s contemporary and fellow Scot, was critical of reductionism. Let’s consider two “Reidian” objections to reductionism.

The first problem concerns the reliance of reductionism on individual observation. For reductionism, the only evidence anyone can rely on to justify believing testimony is their own observation. But how many of us can really reconstruct a good inductive argument from our own experience alone, relying on nothing that we have been told, for thinking that an individual’s testimony is likely to be true? Reid thought the attempt would be hopeless, claiming that “most men would be unable to find reasons for believing the thousandth part of what is told them” (Reid [1764] 2000, 194). If Reid is right, reductionism implies that we are rarely justified in believing testimony. It doesn’t follow that reductionism is false, of course. Maybe we should be skeptics about most of what we are told. But most reductionists are not skeptics about testimony. Thus, Reid’s argument is a powerful objection to non-skeptical reductionism.[10] Call this the not enough evidence objection (NEEO).[11]

Another problem inspired by Reid concerns the beliefs of small children. This objection is called the infant/child objection (ICO).[12] This objection proceeds from two observations. First, very young children lack many of the concepts adults have, and have far fewer experiences than adults on which to base their beliefs. Second, it is obvious that young children have justified testimonial beliefs. However, the cognitive innocence of very young children makes it very hard to see how, according to reductionism, they could have testimonially justified beliefs. So, the ICO urges, reductionism is false.

C. Non-Reductionism

Can testimony justify beliefs without being supported by non-testimonial evidence? Non-reductionists think so.[13] Just as perceptual beliefs are justified without any inference, they argue, so are testimonial beliefs.

A way of stating (testimonial) non-reductionism is the following: you are justified in accepting some S’s testimony that p, if and only if:

- You receive their testimony that p, and

- p is undefeated.[14]

Testimonial justification is therefore fairly straightforward. Having someone’s testimony that p at least to some degree justifies one in believing p.[15]

An initial objection to non-reductionism is that it justifies gullibility (Fricker 1994). If non-reductionism involves a presumptive right to trust whatever you are told, all it takes to be justified in believing a proposition, after all, is for someone to tell you it is true. Granted, one must not have defeaters, but non-reductionism imposes no requirement that one be vigilant to the trustworthiness of testimony. Without such vigilance, available defeaters will be overlooked. However, a requirement that one monitor for trustworthiness would appear to invalidate the presumptive right to trust. Thus, non-reductionism licenses gullibility. The problem with Fricker’s objection, non-reductionists have replied, is that she seems to assume that monitoring must be to some degree conscious. However, they argue, there is no reason that monitoring could not be unconscious and automatic, from which it follows that one may still have a presumptive right to trust while not being gullible (Henderson and Goldberg 2006).

D. The Dialectic between Reductionism and Non-Reductionism

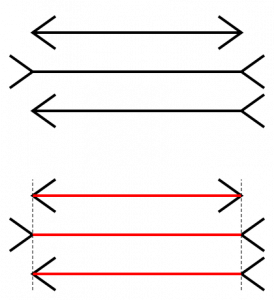

Non-reductionism suffers from two major theoretical disadvantages when compared to reductionism. Reductionism explains why we are justified in relying on testimony in terms of a familiar sort of justification: (broadly) inductive justification. If reductionism is as explanatorily powerful as non-reductionism, it looks like it will be the simpler theory, benefiting from Ockham’s razor. Furthermore, there is a phenomenalistic problem with non-reductionism. Other sources of justification share a compelling “presentation-as-true.” When we seem to perceive, introspect, remember, or intuit something, it seems to us to be true, even if we presently reject what seems to be true. Consider an optical illusion like the Müller-Lyer lines (Figure 1).

Sight presents the three lines to us as having different lengths, even if we believe—know, even—that they are the same lengths. Testimony seems to be different. It doesn’t “wear its truth on its sleeve” in the same way as other sources of justification. Even though we believe testimony “automatically” much of the time, it seems to be more like our conclusion that there is a fire when we see smoke. The best reply for the non-reductionist would be to offer an account of testimony as evidence which is both independently plausible and permissive enough to count testimony as a non-reducible form of evidence.[16]

Setting aside fundamental questions about evidence, the most powerful argument for non-reductionism is that reductionism cannot avoid skepticism, via either the NEEO or ICO.

Can the reductionist say anything in reply to these objections? The issue is too complicated to deal with at length here. In my own view, the most promising reply to the NEEO appeals to inference to the best explanation.[17] In brief, it may be that the reductionist can treat our experiences—especially of testimony, communication more generally, and other social interactions—as data, the best available explanation of which is that many individual cases of testimony are true. Such an approach to replying to the NEEO may also help in replying to the ICO if it could be argued that the very simple explanations available to young children justify believing testimony (Rowley 2016). Alternatively, the reductionist could argue that the reliability of testimony is tacitly confirmed while children learn that there are reliable associations between words, contexts of utterance, and truth (Shogenji 2006, 340).

Disagreement

Surprisingly, it is only in the last twenty years or so that philosophers have become especially interested in the epistemological significance of disagreement. Here, I will first say something about disagreement in general, laying out some basic concepts and highlighting an important role that disagreement plays in forming our views about the world. Then, I will point out a thorny philosophical problem that emerges.

The mere fact of disagreement is commonplace. If we interact with others, we encounter disagreement frequently. If you have any political beliefs at all, it will not be hard to find someone who disagrees. On the other hand, many of our disagreements arise and are resolved without much fanfare. Consider anything you’ve had to do in cooperation with someone else: a group project, fixing a car, or playing on a team. Different beliefs about goals, procedures, division of labor, and so forth, emerge and evolve over the course of collaboration. Some of these disagreements cause conflicts, some momentary, some lasting.

These disagreements offer us, as David Christensen puts it, “opportunities for epistemic self-improvement” (Christensen 2007, 187). We know we are fallible and limited in our first-hand evidence about the world. We value eyewitness accounts and expert opinion not only because they can inform us, but because they can correct us when we have a false belief. At least some of the time, the justified response to finding out someone disagrees with us is to adjust our beliefs to agree with the other person.

Of course, it isn’t always the case that we should abandon our belief that p when we find out that someone else believes p is false. If the best explanation of our difference of opinion is not that the other person has evidence we lack, but that they are ignorant, misinformed, biased, or mentally compromised (e.g., concussed, drunk, or delirious), this probably does not justify our ceasing to believe as we do.[18]

The upshot is that sometimes disagreement provides us with evidence about how the world is. It does this by giving us evidence about the beliefs other people have about the world. This changes our own body of evidence and what is justified for us to believe.

Box 2 – The Evidence of Evidence Principle & Disagreement

The EEP (see Box 1) also contributes to our understanding of disagreement. The principle suggests that usually, finding out that an expert believes that p (where p is in their area of expertise) is strong evidence that p—stronger than any competing evidence a novice is likely to have. The reasonable thing for a novice is usually to agree with the expert. On the other hand, if we find out that a peer disagrees with us about p, we learn that a comparable body of evidence supporting their view about p (and not ours) likely exists. By the EEP, this is evidence for us about p—and usually partially or fully defeats our original evidence concerning p, justifying some degree of conciliation.

The importance of disagreement as a source of evidence is embodied in various practices in which we engage. Experts realize that second opinions can confirm or disconfirm our initial judgments. Academic peer review formalizes this check on our fallibility by risking the possibility that experts will disagree with the conclusions of authors of new work in their discipline. Another practice is creating “space” for disagreement. Where individuals are free and even encouraged to voice their disagreement, the group is less likely to fall into unjustified groupthink or other biases. This is one of the reasons there are usually academic freedom protections for students and faculty within universities. For similar reasons, the freedom to publicly disagree is usually legally protected in liberal democracies.

A. Peer Disagreement

Suppose we both have thermometers. Yours reads 30. Mine reads 70. What should we believe? If we add that we have carefully calibrated yours and found it very reliable, while mine isn’t, then it would seem that we should believe yours. But what if we have tested both and, until now, have found them to be equally reliable? It won’t do, in such a case, to appeal to our ownership of the thermometer—that is obviously irrelevant. If we have no other evidence about the thermometers or temperature (suppose you’re in a spacesuit and can’t feel the air for yourself), then it appears that we should suspend judgment about which thermometer is right.[19]

Now imagine that these thermometers are in our minds or, rather, are our minds. Suppose you believe p and I believe p is false. If we both know you’re more likely to be right (say we know that you are intelligent and have given sober, fair-minded consideration to all of the evidence, while I have only given a cursory reading to one source of dubious value), then it seems I should treat your belief about p as something like testimony. I should change my view and adopt yours. But what if we are both epistemic peers about p, and we know it? In other words, suppose we know that we’re equally epistemically situated with respect to p, and therefore equally likely to have the truth. If the analogy with thermometers holds, then it looks like we are justified only in suspending judgment about p once we find out about the disagreement. Put another way, we should conciliate, adopting an attitude closer to our peer’s attitude than our initial attitude (Elga 2007).[20]

Conciliation seems like it explains the value of disagreement outlined above. Peer review is valuable because we find someone who is at least as likely to be right as we are and, if we find out that they disagree, we decrease our confidence and seek new evidence (or at least a reason to think that we aren’t peers after all).

However, there are a couple of things that should give us pause before simply agreeing that we should always conciliate when we find out that a peer disagrees with us.

The conciliatory response to peer disagreement, or conciliationism, seems to have serious skeptical consequences. Controversial beliefs are often central to our view of the world: they include political, religious, scientific, and philosophical beliefs. Yet for most of these beliefs, you know of people who disagree with you and who appear to be your peers, or even experts, with respect to them. Further, even if you don’t know of specific individuals who qualify, you are likely justified in believing that somewhere in the world, there is such a peer, if not an expert, who disagrees with you.[21]

This suggests grounds for a limited sort of skepticism—but not an insignificant one. Do you believe that a god exists? Do you believe that there isn’t one? Chances are that there is someone you know who is as fair-minded, intelligent, and acquainted with the relevant arguments as you are (if not more so). If you encounter such a peer, it would appear that you have a reason to abandon your own belief (or weaken it). After all, what non-arbitrary reason do you have to prefer your judgment to that of your peer? The upshot is that we may have some powerful reasons for skepticism about a whole range of controversial propositions—reasons to either significantly weaken our confidence or suspend judgment altogether about propositions which may be quite important to us.[22]

B. Resisting Skepticism

Are there ways of resisting this skeptical argument? We might call the (non-skeptical) view that sometimes (or frequently) it is justified to continue holding our original attitude in the face of peer disagreement in scenarios like the above the steadfast view.[23]

Objection 1 (to the skeptical argument): Maybe peers aren’t that common. If you and I are just as likely to be right about p and I know this, then it looks like I am being arbitrary in continuing to believe as I do about p when I find out that you disagree with me. But how often is it that we know (or are strongly justified in believing) that we are just as likely to be right as someone else? Perhaps the skeptical consequences can be avoided because real, known epistemic peers are rare.[24]

This looks initially promising as a means of defending our controversial beliefs. There really aren’t that many ideal cases in which we and someone we disagree with are perfectly balanced in our disagreement about some p. However, a little more consideration shows that this response only goes so far. The more likely it is, on my evidence, that you and I are competent peers, the more evidence I get from finding out about your disagreement with me that your view is the correct one. Think of it this way: even if I justifiably believe my thermometer is more likely to be accurate than yours is, I cannot simply dismiss your thermometer’s reading. It is evidence. If it disagrees with my thermometer, it is some evidence that my thermometer is wrong. The closer your thermometer is in accuracy to mine, the stronger the evidence is that my thermometer is the one that is in error and the closer to suspending judgment I should come.
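The thermometer reasoning can be made concrete with a small calculation. The sketch below rests on toy assumptions that are not in the text: each thermometer is accurate independently with a fixed probability, and the two readings agree only when both are accurate. The function name and the sample reliabilities are hypothetical.

```python
def chance_mine_is_right(r_mine: float, r_yours: float) -> float:
    """P(my thermometer is accurate | the two readings disagree).

    Toy assumptions: each thermometer is accurate independently with the
    given probability, and the readings agree only when both are accurate.
    """
    if r_mine * r_yours >= 1:
        raise ValueError("two perfectly reliable thermometers cannot disagree")
    mine_right_yours_wrong = r_mine * (1 - r_yours)
    they_disagree = 1 - r_mine * r_yours  # at least one reading is inaccurate
    return mine_right_yours_wrong / they_disagree


# My thermometer is 90% reliable; yours gets steadily closer to mine.
for r_yours in (0.5, 0.7, 0.8, 0.9):
    print(r_yours, round(chance_mine_is_right(0.9, r_yours), 3))
```

Under these assumptions, my confidence that my own reading is correct falls as your thermometer's reliability approaches mine. When the two are equally reliable, it drops below one half and neither reading is favored, which is the situation in which suspending judgment looks appropriate.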

Objection 2 (to the skeptical argument): It might be thought that the argument for conciliation is self-defeating. Philosophers disagree about the correct response to peer disagreement. It looks like all an opponent of conciliation has to do to “win” the argument is continue to maintain their position. The “conciliationist” should follow their own advice and either come to agree with their opponent or continue to disagree with less confidence than before. If they still disagree after this initial conciliation, they should follow their advice again, and either agree with their opponent or become less confident than they were. Let this continue for a while and they will no longer be justified in believing conciliationism. So, it would appear that conciliationism defeats itself, so long as its opponent holds firm.
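The repeated conciliation described in this objection can be traced numerically. The sketch below assumes one particular reading of conciliation, namely "splitting the difference" by averaging one's credence with the peer's, and assumes the opponent's credence never moves. Both the function name and the starting credences (0.9 and 0.1) are hypothetical.

```python
from typing import List


def repeated_conciliation(my_credence: float, peer_credence: float,
                          rounds: int) -> List[float]:
    """Credence after each round of splitting the difference with a peer
    whose credence never moves (a steadfast opponent)."""
    history = [my_credence]
    for _ in range(rounds):
        # Equal-weight conciliation: move halfway toward the peer's credence.
        my_credence = (my_credence + peer_credence) / 2
        history.append(my_credence)
    return history


# Hypothetical starting points: 0.9 confidence in conciliationism for its
# defender, 0.1 for a steadfast opponent.
for n, credence in enumerate(repeated_conciliation(0.9, 0.1, 5)):
    print(n, round(credence, 3))
```

On this model, the conciliationist's confidence falls to 0.5 after a single round and keeps approaching the opponent's credence without ever dropping below it. Other ways of modeling conciliation would give different trajectories.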

The main problem with this objection is that it doesn’t show that conciliationism is false. At best, it shows that it might be true and, yet, not justified for belief.[25] Additionally, if the non-conciliating “peer” cannot give a good account of why they are not also conciliating, this may undermine the evidence that the conciliationist has for conciliating in the first place.

Box 3 – Rational Uniqueness

Rational uniqueness (RU) is the principle that each body of evidence supports only one attitude toward a proposition. One way of defending a steadfast view would be to argue that RU is false. The alternative to RU is rational permissivism (RP). According to RP, two peers might justifiably disagree about p, because both of them have attitudes in the range the evidence permits.

RP has a curious consequence. It means that for a single body of evidence, both p and not-p could be justified for belief. Thus, one could truthfully say, “p, but my evidence equally supports not-p.” But that sounds altogether wrong to my ears. When the evidence equally positions one with respect to p and not-p, “I have no idea whether p” sounds much better. In that case, suspension of judgment is the unique justified attitude, in accordance with RU.[26]

It is also not clear that RP will do very much against the skeptical argument. The reason has to do with the range of attitudes permitted by a given body of evidence. A large range would allow two individuals to confidently believe p on the one hand, and not-p on the other. But such a large range is highly implausible. On the other hand, a narrow range might be plausible, but would do very little to turn aside the argument for conciliationist skepticism. Such a narrow range might leave both disputants only justified in maintaining a very weak belief that p or not-p.

It is telling that even those who disagree with conciliationism in theory agree in practice that knowing about peer disagreement often calls for a kind of epistemic humility. Knowing about peer disagreement should make us less confident in the accuracy of our initial judgment: it should exert some degree of “skeptical pressure” on our beliefs. In my own view, it is vitally important to be aware that we do not form our beliefs, even our most cherished and important ones, in a vacuum, epistemically insulated from other minds. We are more interconnected than we have ever been. Awareness of disagreement is a tonic for the ills of the digital echo chambers in which each side of a controversy seems oblivious to the other, because digital filters, not the quality of the evidence, give the appearance of agreement on every side. Anyone who can’t find a disagreeing peer (or someone near enough) for their most cherished political, philosophical, and religious beliefs would do well to get out of the narrow confines of such epistemically limiting social circles. Engage, and it won’t take long to find them.

Questions for Reflection

- How does the term “testimony” as used in epistemology differ from its usage in everyday life?

- A widespread attitude is that there is a large class of testimony that we should be disposed to dismiss (e.g., gossip, rumors, tabloid headlines, and conspiracy theories). Given that testimony is an important source of our justification and knowledge, how might this attitude be defended? Is there something different about this class of testimony as opposed to, say, believing the average stranger on the street whom you ask for directions?

- There is a strong emphasis in liberal, democratic societies on learning to think for ourselves and formulating our own beliefs. Moreover, such epistemic autonomy is protected by a “right to our own opinions.” Would this be a good argument against epistemically relying on testimony? How might one respond to the argument?

- Is reductionism or non-reductionism the more plausible theory of testimonial justification? Why?

- Not all apparent disagreements are genuine. Sometimes it appears that two people disagree merely because they are “talking past” one another. Their differences are “merely verbal.” Can you think of an example where you have experienced this yourself? What, if any, are the epistemic ramifications of this phenomenon?

- How does an “epistemic peer” differ from a “peer” in the ordinary sense of the term? Given an average intro philosophy class, determine whether each usage of the term plausibly applies to all classmates with respect to issues in epistemology, politics, science, and so on.

- Most scientists agree that human-induced climate change is both real and urgent. Consider a non-expert climate skeptic who encounters this agreement. On the basis of your views about the epistemology of testimony, disagreement, and the like, what is the appropriate epistemic stance for this person upon such an encounter?

- Does conciliationism really require repeated conciliation with apparent peers who refuse to conciliate? Perhaps conciliation can be construed so that after the first instance, staying put is a way to sustain the conciliation one has already accomplished. No further adjustment is required. Is this a plausible view? If so, would it be another way of stopping the slippery slope into skepticism?

- Consider two people who “agree to disagree” on some matter. Is it possible, given conciliationism, for them to recognize each other as reasonable peers?

Further Reading

Social Epistemology

Goldman, Alvin, and Cailin O’Connor. 2019. “Social Epistemology.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/fall2019/entries/epistemology-social/.

Goldman, Alvin, and Dennis Whitcomb, eds. 2011. Social Epistemology: Essential Readings. New York: Oxford University Press.

Testimony

Adler, Jonathan. 2017. “Epistemological Problems of Testimony.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/win2017/entries/testimony-episprob/.

Gelfert, Axel. 2014. A Critical Introduction to Testimony. New York: Bloomsbury Academic.

Green, Christopher. “Epistemology of Testimony.” In The Internet Encyclopedia of Philosophy. https://www.iep.utm.edu/ep-testi/.

Lackey, Jennifer, and Ernest Sosa, eds. 2006. The Epistemology of Testimony. New York: Oxford University Press.

Disagreement

Christensen, David, and Jennifer Lackey, eds. 2016. The Epistemology of Disagreement: New Essays. New York: Oxford University Press.

Feldman, Richard, and Ted A. Warfield, eds. 2010. Disagreement. New York: Oxford University Press.

Matheson, Jonathan, and Bryan Frances. 2018. “Disagreement.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/win2019/entries/disagreement/.

References

Anscombe, G. E. M. (1979) 2008. “What is it to Believe Someone?” In Faith in a Hard Ground: Essays in Religion, Philosophy and Ethics, edited by Mary Geach and Luke Gormally, 1–10. Charlottesville, VA: Imprint Academic.

Burge, Tyler. 1993. “Content Preservation.” The Philosophical Review 102: 457–88.

Carey, Brandon. 2011. “Possible Disagreements and Defeat.” Philosophical Studies 155 (3): 371–81.

Christensen, David. 2007. “Epistemology of Disagreement: The Good News.” Philosophical Review 116: 187–217.

Coady, C. A. J. 1992. Testimony: A Philosophical Study. New York: Oxford University Press.

Elga, Adam. 2007. “How to Disagree about How to Disagree.” In Disagreement, edited by Ted Warfield and Richard Feldman, 175–86. New York: Oxford University Press.

Fricker, Elizabeth. 1994. “Against Gullibility.” In Knowing from Words: Western and Indian Philosophical Analysis of Understanding and Testimony, edited by Bimal K. Matilal and Arindam Chakrabarti, 125–61. Dordrecht: Kluwer Academic.

———. 2017. “Inference to the Best Explanation and the Receipt of Testimony: Testimonial Reductionism Vindicated.” In Best Explanations: New Essays on Inference to the Best Explanation, edited by Kevin McCain and Ted Poston, 250–81. New York: Oxford University Press.

Goldberg, Sanford. 2008. “Testimonial Knowledge in Early Childhood, Revisited.” Philosophy and Phenomenological Research 76 (1): 1–36.

Goldman, Alvin, and Cailin O’Connor. 2019. “Social Epistemology.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/fall2019/entries/epistemology-social/.

Graham, Peter J. 2006. “Liberal Fundamentalism and Its Rivals.” In The Epistemology of Testimony, edited by Jennifer Lackey and Ernest Sosa, 93–115. New York: Oxford University Press.

Greco, John. 2012. “Recent Work on Testimonial Knowledge.” American Philosophical Quarterly 49 (1): 15–28. http://www.jstor.org/stable/23212646.

Henderson, David, and Sanford Goldberg. 2006. “Monitoring and Anti-Reductionism in the Epistemology of Testimony.” Philosophy and Phenomenological Research 72 (3): 600–17.

Huemer, Michael. 2011. “Epistemological Egoism and Agent-Centered Norms.” In Evidentialism and its Discontents, edited by Trent Dougherty, 17–33. New York: Oxford University Press.

Hume, David. (1777) 1993. An Enquiry Concerning Human Understanding. Edited by Eric Steinberg. 2nd ed. Indianapolis: Hackett.

Kelly, Thomas. 2005. “The Epistemic Significance of Disagreement.” In Oxford Studies in Epistemology (Vol. 1), edited by Tamar Szabo Gendler and John Hawthorne, 167–96. Oxford: Oxford University Press.

———. 2010. “Peer Disagreement and Higher Order Evidence.” In Disagreement, edited by Richard Feldman and Ted Warfield, 183–217. New York: Oxford University Press.

King, Nathan L. 2012. “A Good Peer is Hard to Find.” Philosophy and Phenomenological Research 85 (2): 249–72.

Lackey, Jennifer. 2005. “Testimony and the Infant/Child Objection.” Philosophical Studies 126: 163–90.

———. 2006. “The Nature of Testimony.” Pacific Philosophical Quarterly 87: 177–97.

———. 2010. “What Should We Do When We Disagree?” In Oxford Studies in Epistemology (Vol. 3), edited by Tamar Szabo Gendler and John Hawthorne, 274–93. Oxford: Oxford University Press.

———, ed. 2014. Essays in Collective Epistemology. Oxford: Oxford University Press.

Lyons, Jack. 1997. “Testimony, Induction and Folk Psychology.” Australasian Journal of Philosophy 75 (2): 163–78.

Malmgren, Anna-Sara. 2006. “Is There a Priori Knowledge by Testimony?” The Philosophical Review 115 (2): 199–241.

Matheson, Jonathan, and Bryan Frances. 2018. “Disagreement.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/win2019/entries/disagreement/.

Matilal, Bimal K., and Arindam Chakrabarti, eds. 1994. Knowing from Words: Western and Indian Philosophical Analysis of Understanding and Testimony. Dordrecht: Kluwer Academic.

Phillips, Stephen. 2019. “Epistemology in Classical Indian Philosophy.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta. https://plato.stanford.edu/archives/spr2019/entries/epistemology-india/.

Plato. (ca. 390 BCE) 2009. Apology. Translated by Benjamin Jowett. The Internet Classics Archive. http://classics.mit.edu/Plato/apology.html.

Proctor, Robert, and Londa L. Schiebinger, eds. 2008. Agnotology: The Making and Unmaking of Ignorance. Stanford: Stanford University Press.

Reid, Thomas. (1764) 2000. An Inquiry into the Human Mind on the Principles of Common Sense: A Critical Edition. Edited by Derek R. Brookes. University Park: Pennsylvania State University Press.

Rowley, William D. 2012. “Evidence of Evidence and Testimonial Reductionism.” Episteme 9: 377–91. https://doi.org/10.1017/epi.2012.25.

———. 2016. “An Evidentialist Epistemology of Testimony.” PhD diss., University of Rochester.

Shogenji, Tomoji. 2006. “A Defense of Reductionism about Testimonial Justification of Beliefs.” Noûs 40 (2): 331–46.

Weiner, Matthew. 2003. “Accepting Testimony.” Philosophical Quarterly 53: 256–64.

White, Roger. 2009. “On Treating Oneself and Others as Thermometers.” Episteme 6 (3): 233–50.

- I am making the common assumption that to know that p, one must be justified in believing p. See Chapter 1 of this volume by Brian C. Barnett.

- Some include the analysis of group belief itself under the heading of SE (Goldman and O’Connor 2019). While related to SE, I would classify the analysis of group belief as a topic in metaphysics.

- See Lackey (2014).

- See Proctor and Schiebinger (2008).

- For those interested in epistemic injustice, see Chapter 8 of this volume by Monica C. Poole.

- See Goldman and O’Connor (2019).

- For example, Socrates’s (ca. 469–399 BCE) criticism of Greek religion was one of the reasons why he was tried by the Athenians (Plato [ca. 390 BCE] 2009).

- For example, the epistemological work of René Descartes (1596–1650) and John Locke (1632–1704) can both be seen as responses to widespread disagreement about the foundations of religious belief. For an overview of Cartesian and Lockean epistemology, see Chapter 3 of this volume by K. S. Sangeetha.

- For an overview, see Phillips (2019) and Matilal and Chakrabarti (1994).

- For an early and accessible version of this argument, see Anscombe ([1979] 2008). For a more recent and influential version, see Coady (1992), especially Chapter 4 of that work.

- See Rowley (2012).

- See Lackey (2005) and Goldberg (2008).

- Some representative proponents of versions of non-reductionism include Reid ([1764] 2000), Coady (1992), Weiner (2003), Graham (2006), and Goldberg (2008).

- It should be noted that there is a lot of variation in the way the terms “reductionist” and “non-reductionist” are used in philosophy. Recognizing this, I have opted for a simplified formulation of both views. A constructive discussion of formulations of reductionism and non-reductionism can be found in Greco (2012).

- I say “some degree” because one can have some justification for believing p without having on-balance justification for believing p. A non-reductionist might hold that undefeated testimony that p is true is always some reason to believe p, even if it need not be sufficient evidence to believe p. A view of this kind is proposed by Graham (2006). For simplicity, however, I will be treating non-reductionism as though it provides sufficient justification for belief in the absence of any defeaters.

- See Burge (1993) and Chapter 9 in Coady (1992) for examples of such attempts. A critical discussion can be found in Malmgren (2006).

- For a defense of reductionism that makes use of inference to the best explanation, see Lyons (1997) and Fricker (2017). For a criticism of this kind of defense, see Malmgren (2006). For discussion of the role of inference to the best explanation in epistemic justification, see Chapter 2 of this volume by Todd R. Long. For discussion of the relationship between best explanation and probability, see Chapter 6 of this volume by Jonathan Lopez.

- It might be thought that the epistemology of disagreement simply is a sub-issue within the epistemology of testimony. However, while testimony often is evidence that someone disagrees with us, not all evidence from disagreement is testimonial. I can infer that we disagree by watching your behavior. Suppose I ate the last cookies in the jar and, therefore, believe the cookie jar is empty. When I watch you approach the jar as if to open it, I may have evidence that you believe it is not empty.

- For a discussion of the thermometer analogy, see White (2009).

- Also see Matheson and Frances (2018) for a further discussion of conciliation and steadfast responses to disagreement.

- For a discussion of the potential skeptical force of merely possible (rather than actual) disagreement, see Carey (2011).

- For those interested in traditional skeptical arguments, see Chapter 4 of this volume by Daniel Massey.

- Note that the truth of the steadfast view may not be enough to avoid skepticism. It won’t be enough that sometimes we are justified in maintaining our original view in the face of disagreement. We must be justified in doing so in many of those real-world cases in which scientific, political, philosophical, and religious beliefs are threatened by conciliation. Defenders of the steadfast view include Kelly (2005, 2010), Lackey (2010), and Huemer (2011).

- For a discussion, see King (2012).

- Recall that truth and justification can diverge, assuming fallibilism. See Chapter 1, Chapter 2, Chapter 4, and Chapter 6 of this volume for discussion of this point.

- See Box 1 in Chapter 2 of this volume for another argument for suspension of judgment in cases in which the evidence equally favors p and not-p.

The study of how social relationships and interactions affect the epistemic properties of individuals and groups.

Any utterance (e.g., speaking, writing, or signing) by which the actor intends to communicate that proposition p is true.

The study of the epistemic properties of groups and their beliefs.

The study of ignorance, especially when ignorance is caused or influenced by groups who have an interest in that ignorance.

Wrongdoing related to knowledge. This includes individual interpersonal interactions that demonstrate injustice, as well as larger structures of inequity in knowledge distribution or knowledge production sustained in institutions such as the legal system, medicine, and education.

The view that the aim of democracy is (in part) to favor a true outcome (with voting answering a question such as, “which candidate is best to lead?”).

The view that some person S1 is justified in believing some S2’s testimony that p if and only if (a) S1 receives S2’s testimony that p, (b) S1 has inductive evidence based on observation that S2’s testimony that p is reliable, and (c) p is not defeated by other evidence S1 has.

Roughly, the principle that, whenever some person S1 has some evidence that S2 has some evidence in support of p, then S1 has some evidence in support of p.

An objection to (testimonial) reductionism. If reductionism is true, then, in order to avoid testimonial skepticism, we must have enough testimony-independent evidence to justify many of our testimonial beliefs. But we don’t have enough evidence and we know testimonial skepticism is false. Thus, reductionism is false.

An objection to (testimonial) reductionism. If reductionism is true, then young children are too cognitively unsophisticated to have testimonially justified beliefs. But it is obvious that young children have testimonially justified beliefs. Thus, reductionism is false.

The view that sometimes someone S is justified in believing some testimony that p even though S lacks testimony-independent evidence that the testimony is reliable.

The methodological principle that, given two competing hypotheses, the simpler hypothesis is the more probable (all else being equal). As the “razor” suggests, we should “shave off” any unnecessary elements in an explanation (“Entities should not be multiplied beyond necessity”). The principle is named after the medieval Christian philosopher/theologian William of Ockham (ca. 1285–1347). Other names for the principle include “the principle of simplicity,” “the principle of parsimony,” and “the principle of lightness” (as it is known in Indian philosophy).

Epistemic peers with respect to a proposition p are equally likely to believe the truth about p (i.e., each is just as unbiased, intelligent, sober, well-informed, etc.).

In a disagreement about a proposition p, a person S1 conciliates when S1 changes their attitude toward p in the direction of S2’s attitude toward p.

The view that whenever one discovers that an epistemic peer disagrees about some proposition p, one is justified in conciliating. So, for example, if S1 confidently believes p and discovers that their peer S2 believes p is false with the same degree of confidence, then S1 will be justified in decreasing their confidence about p.

The view that sometimes, when one finds out that a peer disagrees, one is justified in retaining one’s original doxastic attitude.

The principle that a body of evidence supports at most one attitude toward any proposition. RU denies rational permissivism (RP).

The principle that a body of evidence can support a range of attitudes toward a given proposition. RP denies rational uniqueness (RU).